Implementing Digital Sovereignty in AI the Right Way: Architecture Over Ideology

A wave of boycotts is currently sweeping through the U.S. tech world: deleting accounts, canceling subscriptions, “getting out of Big Tech.” The impulse behind this is sound. Too many companies have chosen tools based on convenience and only later realized that they were creating dependencies in the process.

But simply saying "Quit!" is not enough to put a company in a better position. The practical question is:

What data should you keep under your control, and where is it perfectly fine to compromise?

Digital sovereignty is not a black-and-white issue, but rather a strategic balancing act between data protection, competitiveness, and convenience. It is not about geopolitical dogmas, but about deliberate architectural decisions. And that is precisely why “Europe only, whatever the cost” is just as dangerous as “put everything in the U.S. cloud—it’ll be fine.”

If you're responsible for IT, data, compliance, or transformation, you don't need a statement. You need a system.

Digital Sovereignty: Three Goals in Tension

When decision-makers talk about digital sovereignty, there are usually three goals behind it:

Privacy (prioritized, not maximized)

Not all data requires the same level of protection. Those who try to secure everything to the maximum often end up with slower processes, higher costs, and, ultimately, lower adoption rates. Sovereignty therefore also means maintaining control where it really matters.

Competitiveness (Time-to-Value Trumps Tool Dogma)

AI isn't just a strategy document. AI is about speed of implementation: from use case to production-ready solution. Competitiveness means learning quickly, integrating quickly, and operating reliably and efficiently—without having to start from scratch every time there's a technological shift.

Convenience (as a lever, not as a comfort)

Convenience can save time, and time is the most critical competitive factor in many industries. However, convenience must not mean that your expertise disappears into a black box or that you find yourself locked into a situation from which you can no longer extricate yourself.

The key point: IT architecture is the foundation of sovereignty

Most debates revolve around tools ("US vs. EU," "cloud vs. on-premises"). But what really matters is something else: Where is the most valuable part of your AI system, and how portable is it?

You'll feel more confident once you can answer these questions:

- What types of data does the use case process (and which risks would be "existential" versus "unpleasant")?

- What needs to remain with you (e.g., business-critical process knowledge, prompt logic, memory/context, internal policies)?

- What can be interchangeable (models, platforms, providers) because technology is evolving too quickly?

The goal is a sustainable setup that remains stable even when models, providers, or prices change.
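One way to make these three questions concrete is a small classification per use case. The following is a minimal sketch, not a prescribed method: the `Risk` levels, the `UseCase` fields, and the rule inside `placement` are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    UNPLEASANT = 1   # a leak would be annoying but survivable
    EXISTENTIAL = 2  # a leak or loss would threaten the business


@dataclass(frozen=True)
class UseCase:
    name: str
    data_risk: Risk           # question 1: what data does the use case process?
    holds_core_knowhow: bool  # question 2: process knowledge, prompt logic, memory?


def placement(use_case: UseCase) -> str:
    """Return where the use case's assets should live (hypothetical rule of thumb)."""
    if use_case.data_risk is Risk.EXISTENTIAL or use_case.holds_core_knowhow:
        return "own-control"   # keep in storage you control
    return "interchangeable"   # question 3: any provider that can be swapped later


newsletter = UseCase("newsletter drafts", Risk.UNPLEASANT, holds_core_knowhow=False)
pricing = UseCase("pricing assistant", Risk.EXISTENTIAL, holds_core_knowhow=True)

print(placement(newsletter))
print(placement(pricing))
```

Real classifications will have more than two levels, but even a coarse rule like this forces the decision to be written down per use case rather than per tool.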

Our approach at Leaders of AI: predominantly European, focused, and non-dogmatic

At Leaders of AI, we rely primarily on German and European AI and automation platforms and test international tools only where it makes technical sense to do so. Not because “Europe is inherently good” and “the U.S. is inherently bad,” but because we apply clear criteria for every type of data and every system.

We have made this switch ourselves. In the summer of 2025, we removed ChatGPT from all our training programs and moved completely to European tools: Langdock and n8n from Berlin, Flux from the Black Forest, and Make from Munich. Important to note: we removed ChatGPT as an application from our programs, but we continue to use the models behind it via platforms hosted in Europe. It was a conscious decision, and it took effort. Only by practicing digital sovereignty in our own programs can we speak credibly about it.

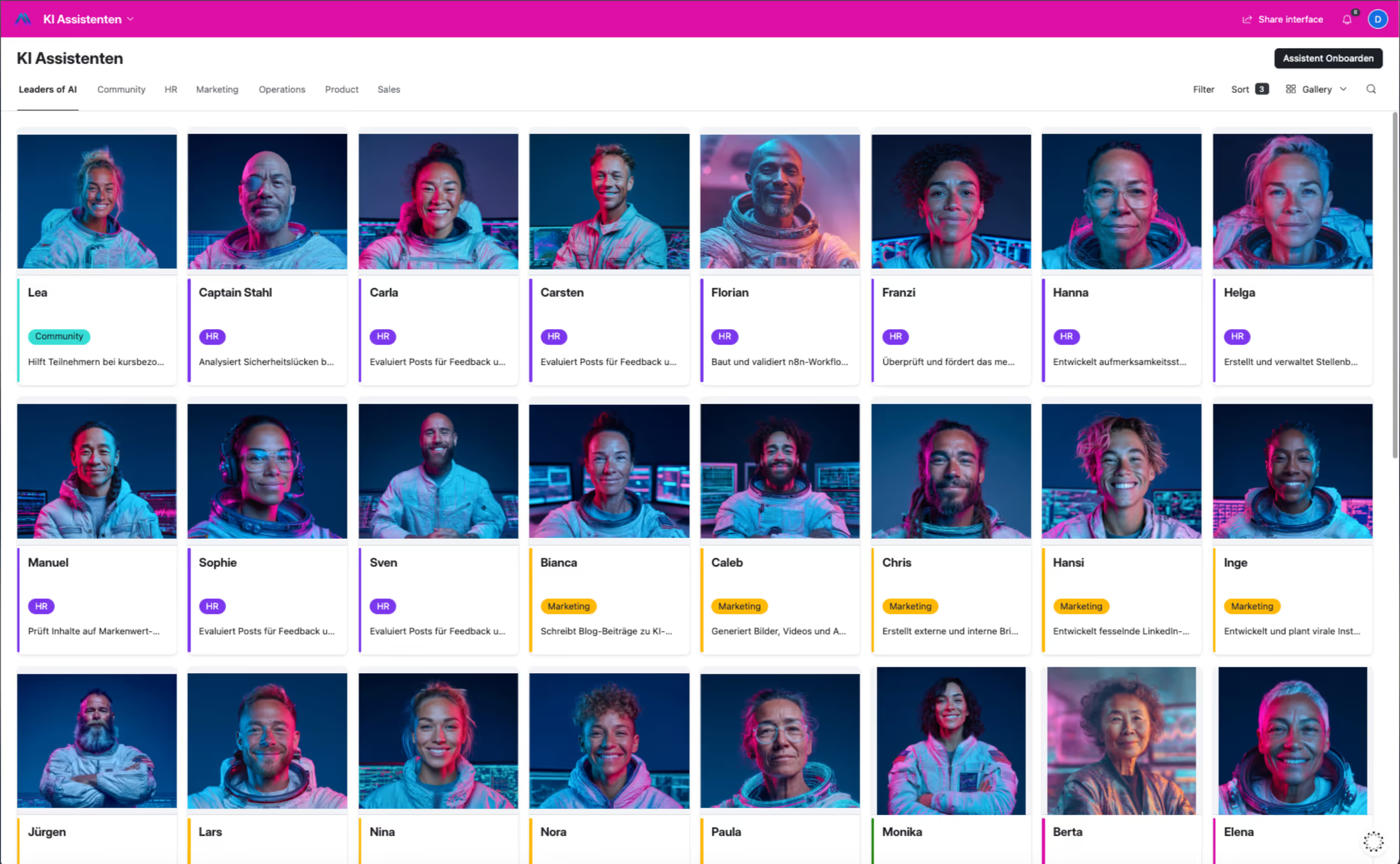

Our most valuable asset is our 50+ AI assistants, not the tool interface

Our AI assistants have significant intellectual value because they are trained over weeks and months, tailored to our company, and become critical to our business. That is precisely why platform independence is not just a buzzword for us—it is a necessity.

Specifically, our stack looks like this: we store each assistant's “personnel file” (its system prompt) and its memory in separate databases, regardless of whether we use n8n or another tool for orchestration. As a result, what we classify as highly critical (strategy, process expertise, and assistant configuration) remains under our control.
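As a rough illustration of this separation, the sketch below keeps system prompts and memory in two independent stores and assembles them on demand. It is not our production setup: the table names, the function names, and the use of in-memory SQLite are assumptions chosen so the example runs anywhere.

```python
import sqlite3

# Two separate stores (hypothetical schema); in-memory so the sketch is self-contained.
prompts_db = sqlite3.connect(":memory:")
memory_db = sqlite3.connect(":memory:")

prompts_db.execute(
    "CREATE TABLE system_prompts (assistant TEXT PRIMARY KEY, prompt TEXT NOT NULL)"
)
memory_db.execute("CREATE TABLE memory (assistant TEXT NOT NULL, note TEXT NOT NULL)")


def save_prompt(assistant: str, prompt: str) -> None:
    # Replace any earlier version so the latest "personnel file" wins.
    prompts_db.execute(
        "INSERT OR REPLACE INTO system_prompts (assistant, prompt) VALUES (?, ?)",
        (assistant, prompt),
    )
    prompts_db.commit()


def remember(assistant: str, note: str) -> None:
    memory_db.execute(
        "INSERT INTO memory (assistant, note) VALUES (?, ?)", (assistant, note)
    )
    memory_db.commit()


def load_assistant(assistant: str) -> dict:
    """Assemble everything an orchestration tool needs to run the assistant."""
    row = prompts_db.execute(
        "SELECT prompt FROM system_prompts WHERE assistant = ?", (assistant,)
    ).fetchone()
    if row is None:
        raise KeyError(assistant)
    notes = [
        note
        for (note,) in memory_db.execute(
            "SELECT note FROM memory WHERE assistant = ? ORDER BY rowid", (assistant,)
        )
    ]
    return {"assistant": assistant, "system_prompt": row[0], "memory": notes}


save_prompt("onboarding", "You are the onboarding assistant.")
remember("onboarding", "New hires start on the first of the month.")
config = load_assistant("onboarding")
```

Because `load_assistant` returns plain data, the orchestration tool only ever receives a copy. Replacing that tool does not touch the stores.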


We keep everything that can be switched without losing data—models, platforms, orchestration—flexible. For us, platforms are an architectural layer, not the foundation. This applies explicitly to European providers as well. Lock-in is lock-in, no matter where it comes from.
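Treating the platform as a layer can be sketched as a thin interface between the assistant configuration and whichever model provider is in use. The example below is only an illustration: `provider_a` and `provider_b` are hypothetical stand-ins that return canned text instead of calling a real model.

```python
from typing import Callable, Dict

# A provider is any function from (system_prompt, user_message) to a reply.
Provider = Callable[[str, str], str]


def provider_a(system_prompt: str, message: str) -> str:
    # Stand-in for a real model call through some platform (hypothetical).
    return f"[A] {message}"


def provider_b(system_prompt: str, message: str) -> str:
    # A second stand-in, to show that swapping requires no other changes.
    return f"[B] {message}"


PROVIDERS: Dict[str, Provider] = {"a": provider_a, "b": provider_b}


def ask(assistant: dict, message: str, provider: str) -> str:
    """Run an assistant against whichever provider is configured."""
    return PROVIDERS[provider](assistant["system_prompt"], message)


assistant = {"system_prompt": "You are the onboarding assistant."}

print(ask(assistant, "Hi", provider="a"))
print(ask(assistant, "Hi", provider="b"))
```

The assistant configuration is identical in both calls. Only the `provider` argument changes, which is the property that keeps an exit path open.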

AI Models: Realism Over Wishful Thinking

Europe is currently lagging significantly behind in the development and availability of leading AI models. Completely independent setups are technically feasible (e.g., on-premises with open-source models). For many use cases, however, this is not the most practical approach.

Our approach: We use GPT, Claude, and Gemini via platforms hosted in Europe. The key consideration remains: What data passes through the system—and what must absolutely remain with us? Pragmatism over ideological purity.

Conclusion: Digital sovereignty is a leadership decision

"Digital sovereignty refers to the ability to act and make decisions independently in the digital space." (Bitkom) For us, digital sovereignty isn’t a black-and-white issue, but a matter of conscious IT architecture: We prioritize by data class, use convenience strategically—and build exit paths so that expertise isn’t tied to a single platform.

Not everything that’s passionately and dogmatically defended on social media is necessarily a sensible path for business.

If you want to do more than just discuss digital sovereignty in AI—if you want to put it into practice—here’s what to do:

MBAI®: Digital sovereignty starts with knowing which AI assistants to build for which tasks and how to configure them so that the know-how stays with you. That’s exactly what you’ll learn in the MBAI: Over 90 hours, you’ll develop, step by step, a team of 15 custom AI assistants for all relevant business areas, with a certificate from Fresenius University of Applied Sciences. https://www.leadersofai.com/mbai

AI Integration Expert: If you take autonomy seriously, you also need to understand the underlying architecture: Which workflows run where? What data flows through which systems? The AI Integration Expert course (120 hours, university certificate) shows you how to effectively integrate AI agents and automations into teams—regardless of platform and without coding. https://www.leadersofai.com/ai-integration-expert

Hansi

AI Copywriter on the 'Leaders of AI' team